DOD Calls Anthropic’s ‘Red Lines’ a National Security Risk

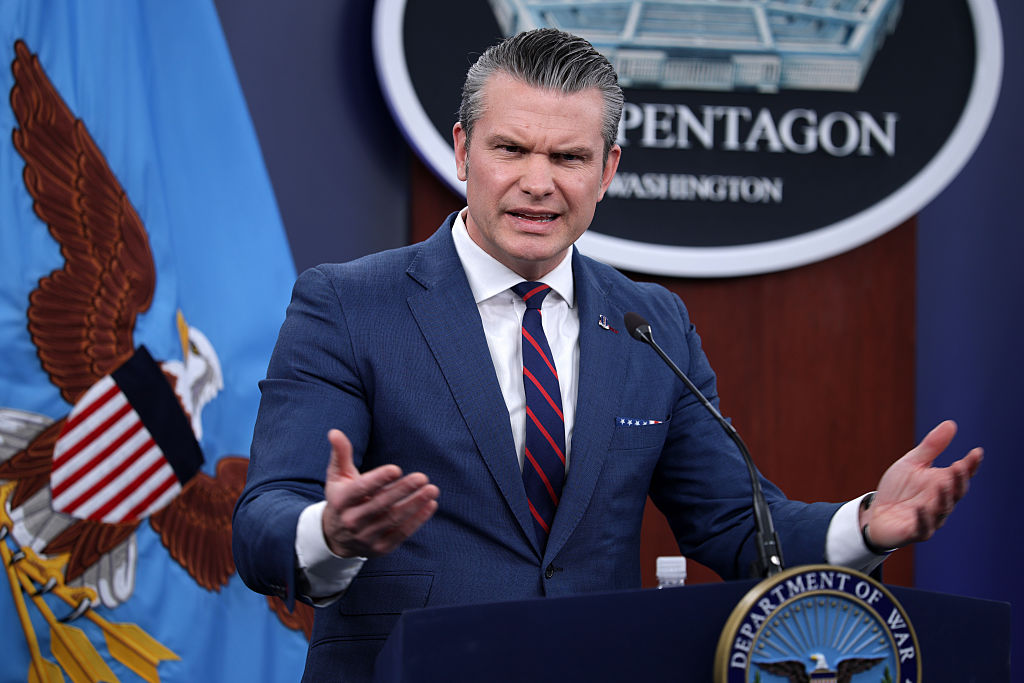

The Pentagon warns that Anthropic’s safety guardrails cross a dangerous line, threatening U.S. national security.

The Department of Defense has issued a stark warning that Anthropic’s newly disclosed “red lines” constitute an “unacceptable risk to national security.” This statement, made public in a recent Pentagon briefing, underscores growing concern that the AI firm’s safety mechanisms, designed to prevent the generation of disallowed content, may inadvertently expose sensitive defense capabilities. Because AI now influences everything from logistics to battlefield decision‑making, the Pentagon’s assessment highlights a critical tension between innovation speed and security rigor.

Anthropic’s red lines are internal thresholds that trigger the model to refuse or heavily moderate requests deemed unsafe, such as instructions for weaponization, disinformation campaigns, or the extraction of information resembling classified material. These safeguards are built into the model’s training pipeline and are meant to protect public welfare, but the Pentagon argues that their breadth and opacity create uncertainty for military planners who rely on predictable AI behavior.

The core of the DOD’s objection lies in the potential for indirect compromise. Even if Anthropic’s system blocks overtly harmful prompts, adversaries could exploit edge cases—subtle phrasing, indirect context, or third‑party prompting—to coax the model into revealing strategic insights or facilitating covert operations. Such latent pathways could enable the rapid diffusion of tactics that threaten troop safety, critical infrastructure, or diplomatic stability, thereby turning a protective feature into a security vector.

If the Pentagon deems these safeguards unacceptable, it may delay or restructure AI integration across weapon systems, simulation environments, and autonomous decision‑making tools. Defense agencies typically demand rigorous, verifiable controls, and Anthropic’s proprietary approach, while innovative, lacks the transparent audit trails required for compliance with DOD standards such as the Risk Management Framework. Consequently, procurement timelines could lengthen, and collaborative research partnerships may be reevaluated to align with stricter governance requirements.

Stakeholders should pursue a balanced pathway: engage Anthropic in transparent testing, request detailed red‑line documentation, and develop supplemental safeguards tailored to military use cases. An independent oversight board, incorporating experts from defense, AI ethics, and cybersecurity, could assess risk levels and certify models for deployment. By doing so, the U.S. can harness the productivity gains of advanced language models while preserving the integrity of national security.

To mitigate the Pentagon’s concerns, Anthropic must provide granular transparency into how its red lines are defined and enforced. This includes publishing detailed methodology for threshold calibration, offering controlled API access for validation, and allowing third‑party auditors to review logging artifacts that record every refusal event. By integrating cryptographic attestation of model versions, implementing real‑time monitoring dashboards, and ensuring immutable audit trails that cannot be altered after deployment, the firm can demonstrate that safety mechanisms do not inadvertently leak sensitive patterns while preserving the confidentiality of defense‑relevant data. Regular red‑team exercises should simulate adversarial prompt campaigns to verify refusal logic under pressure, and any identified gaps must trigger immediate model updates and transparent public disclosures.
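One way to picture the "immutable audit trail" idea is a hash-chained log, in which each refusal record stores a hash of the record before it, so any later alteration breaks the chain. The sketch below is illustrative only and does not describe Anthropic's or the DOD's actual logging. The function names (`append_event`, `verify_log`) and the event fields (`model_version`, `category`, `action`) are hypothetical.

```python
import hashlib
import json

# Placeholder "previous hash" for the first entry in the chain (an assumption of this sketch).
GENESIS_HASH = "0" * 64


def _digest(prev_hash, event):
    """Hash the previous entry's hash together with this event's content."""
    payload = json.dumps({"prev": prev_hash, "event": event}, sort_keys=True)
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def append_event(log, event):
    """Append a refusal event, linking it to the hash of the prior entry."""
    prev_hash = log[-1]["hash"] if log else GENESIS_HASH
    entry = {"event": event, "prev": prev_hash, "hash": _digest(prev_hash, event)}
    log.append(entry)
    return entry


def verify_log(log):
    """Return True only if every entry still matches its recorded hash chain."""
    prev_hash = GENESIS_HASH
    for entry in log:
        if entry["prev"] != prev_hash:
            return False
        if entry["hash"] != _digest(prev_hash, entry["event"]):
            return False
        prev_hash = entry["hash"]
    return True
```

An auditor could call `verify_log` on an exported log and expect it to fail if any recorded event had been edited after the fact. A chain like this is tamper-evident rather than truly immutable: someone able to rewrite the entire log could recompute every hash, so in practice the most recent hash would also need to be stored or signed somewhere outside the operator's control.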

Beyond U.S. borders, allies such as NATO members and Five Eyes partners are watching the debate closely, as their own defense AI programs rely on compatible safety frameworks. If the United States tightens scrutiny on Anthropic’s red lines, it could trigger reciprocal export controls, joint testing initiatives, or a coordinated standard‑setting effort through organizations like the IEEE and ISO. Conversely, failure to align could fragment the global AI security landscape, creating loopholes that adversarial nations might exploit to circumvent safeguards. Joint exercises among allied forces could help harmonize practices and close those gaps.

Defense leaders should adopt a tiered risk assessment that treats AI safety features as mission‑critical components rather than optional add‑ons. Integrating red‑line audits into existing certification processes, establishing clear escalation paths for false‑negative incidents, and funding independent research into adversarial prompt engineering will strengthen resilience. Moreover, fostering public‑private partnerships that include ethical AI scholars can bridge the gap between rapid model innovation and the deliberate, security‑focused pace required by the Department of Defense.

The Pentagon’s warning serves as a timely reminder that AI progress, while promising, must be anchored in robust security stewardship. As the defense community evaluates Anthropic’s red lines, the dialogue will shape policy, procurement, and the broader ecosystem of responsible AI. Policymakers, technology leaders and security analysts must act now to align AI safety with defense imperatives, establishing clear standards and collaborative oversight. Continued engagement will ensure that technological brilliance does not come at the expense of national safety, paving the way for secure, adaptive AI solutions in the years ahead.
