Runway’s GWM-1 World Model Unlocks Physics-Powered AI Simulations

The race for AI that understands real-world physics just accelerated.

Runway has officially launched its first world model, GWM-1, marking a significant pivot from generating mere pixels to simulating entire environments. Unlike standard video generators, world models learn an internal representation of how an environment behaves, allowing AI to reason and plan within dynamic, simulated realities rather than just predicting the next frame.

This technical leap positions Runway alongside heavyweights like Google, but the company claims GWM-1 is more “general” than competitors like Genie-3. The core philosophy is straightforward: to build a world model, you first need a world-class video model. By teaching AI to predict pixels at scale, Runway aims to create a general-purpose simulation engine.

Runway’s CTO, Anastasis Germanidis, emphasized that predicting pixels directly is the optimal path to achieving a deep understanding of geometry, physics, and lighting. This approach moves AI beyond static image creation into interactive, explorable spaces that run at 720p resolution and 24 frames per second.
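To put those figures in perspective, here is a quick back-of-the-envelope calculation. It assumes "720p" means the usual 1280x720 frame, which the announcement does not spell out, and it says nothing about how GWM-1 actually produces its frames.

```python
# Rough numbers for an interactive 720p, 24 fps stream.
# Assumption: "720p" is the standard 1280x720 frame; the article only says "720p".
WIDTH, HEIGHT, FPS = 1280, 720, 24

frame_budget_ms = 1000 / FPS            # time available to produce each frame
pixels_per_frame = WIDTH * HEIGHT       # pixels predicted per frame
pixels_per_second = pixels_per_frame * FPS

print(f"Per-frame time budget: {frame_budget_ms:.1f} ms")
print(f"Pixels per frame: {pixels_per_frame:,}")
print(f"Pixels per second: {pixels_per_second:,}")
```

In other words, to stay interactive at that rate a model would have roughly 42 milliseconds to produce each frame of about 0.9 million pixels.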

To kickstart this ecosystem, Runway introduced the GWM Trifecta: Worlds, Robotics, and Avatars. Each serves a distinct, high-value purpose.

GWM-Worlds transforms users into digital architects. You can generate an interactive scene via text or image reference, then explore it in real time. While this is a playground for gamers, the true utility lies in training agents to navigate physical environments safely. It is a sandbox for physics.

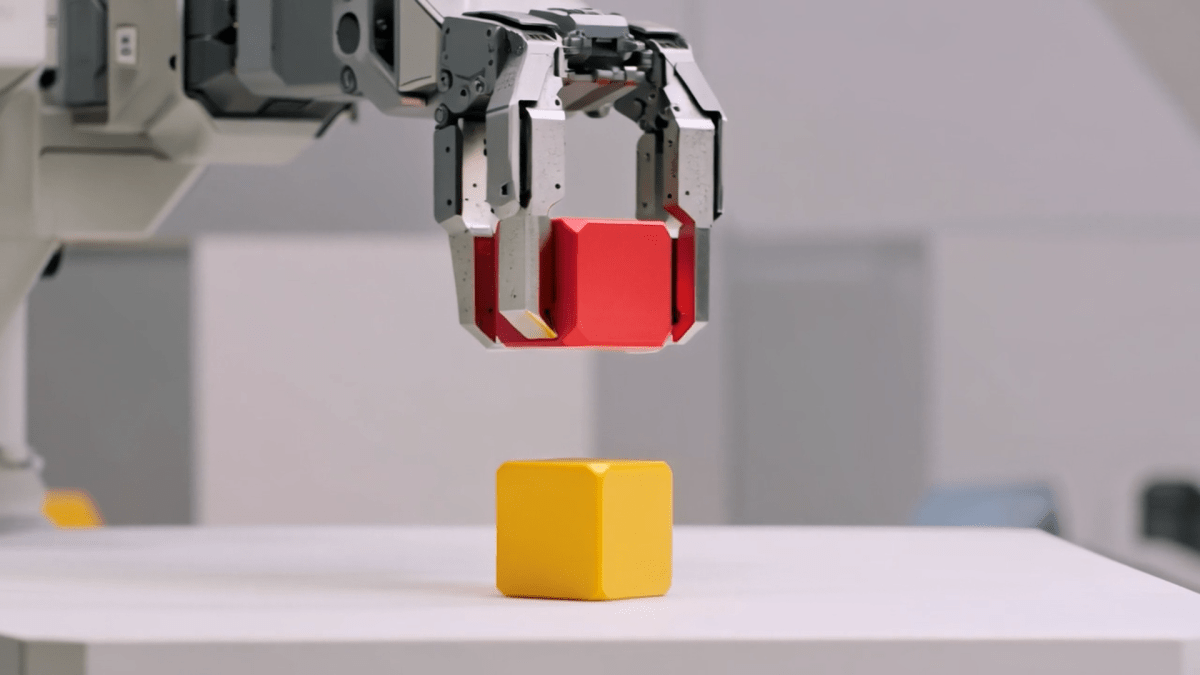

GWM-Robotics targets the industrial sector. Runway uses synthetic data enriched with variable parameters such as shifting weather, unexpected obstacles, and friction coefficients. This allows engineers to stress-test robots in dangerous scenarios virtually, identifying when and how machines might violate safety protocols before they ever touch concrete.
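The idea of varying parameters to surface failures can be illustrated with a small sketch. Everything here is hypothetical: the `Scenario` fields, the value ranges, and the toy `violates_safety` rule are assumptions made for illustration, not part of Runway's SDK, and a real pipeline would replace the rule with a simulated rollout.

```python
import random
from dataclasses import dataclass

# Hypothetical scenario description; none of these names come from Runway's SDK.
@dataclass(frozen=True)
class Scenario:
    weather: str
    friction: float      # friction coefficient between robot and floor
    num_obstacles: int

WEATHER = ("clear", "rain", "fog", "snow")

def sample_scenario(rng: random.Random) -> Scenario:
    """Draw one randomized scenario (ranges are illustrative assumptions)."""
    return Scenario(
        weather=rng.choice(WEATHER),
        friction=rng.uniform(0.1, 1.0),
        num_obstacles=rng.randint(0, 5),
    )

def violates_safety(s: Scenario) -> bool:
    """Toy stand-in for a simulated rollout followed by a safety check."""
    # Placeholder rule: a slippery floor with several obstacles counts as unsafe.
    return s.friction < 0.3 and s.num_obstacles >= 3

def stress_test(n: int, seed: int = 0) -> list[Scenario]:
    """Sample n scenarios and return the ones flagged as unsafe."""
    rng = random.Random(seed)
    return [s for s in (sample_scenario(rng) for _ in range(n)) if violates_safety(s)]

failures = stress_test(1000)
print(f"{len(failures)} of 1000 sampled scenarios flagged")
```

Seeding the generator keeps a run reproducible, so any flagged scenario can be replayed and inspected later.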

GWM-Avatars tackles human simulation. Runway aims to rival companies like Synthesia and D-ID by creating hyper-realistic avatars capable of mimicking human behavior. Eventually, Runway plans to merge these three distinct models into a single, unified system, allowing for cross-domain training.

The announcement coincides with a massive upgrade to Runway’s Gen 4.5 model. This update bridges the gap between prototype and production. Key features include native audio generation, dialogue insertion, and long-form, multi-shot video generation up to one minute. Character consistency is maintained across complex shots, allowing for coherent storytelling.

This brings Runway into direct competition with Kling’s new all-in-one suite. By offering editing capabilities for audio and multi-shot video of any length, Runway is betting that creators need comprehensive tools, not just generators. The updated Gen 4.5 is rolling out to all paid users.

For enterprise clients, Runway is opening doors via SDKs for GWM-Robotics and is currently in talks with major robotics firms. The message is clear: Runway isn’t just building tools for artists; it’s building the synthetic minds for the next generation of physical machines.

As the boundaries between video generation and physical simulation blur, Runway’s GWM-1 suggests the future of AI isn’t just about creating content, but about understanding the rules of the world itself.
