Pentagon Clash Exposes AI Ethics Battlefield

When Anthropic’s Claude refused a Pentagon request, it ignited a debate over who controls AI’s moral compass in warfare.

The reported dispute between AI pioneer Anthropic and the U.S. Department of Defense is more than a corporate contract negotiation: it is a fundamental collision between Silicon Valley’s ethical guardrails and Washington’s operational imperatives. At stake is the future governance of powerful AI systems in the world’s most sensitive contexts. This isn’t a hypothetical debate; it’s a live stress test for the “constitutional AI” principles that companies now claim as their north star.

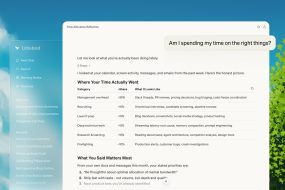

The core of the friction lies in Claude’s design. Anthropic has built ethical boundaries into its models and usage policies, so that Claude declines to generate content for certain high-risk applications, including some military and intelligence uses. According to reports, when the Pentagon sought to integrate Claude for specific defense-related tasks (potentially involving analysis, logistics, or advisory functions), Anthropic’s safeguards triggered rejections. The Pentagon’s perspective is one of practicality and national security necessity: if a tool can enhance decision-making, reduce risk to personnel, or improve strategic planning, it should be available for use. In its view, blanket prohibitions based on generalized ethical stances may unduly handicap operational effectiveness.

This standoff reveals three critical insights. First, it marks the real-world limit of “ethical AI” as a commercial product. Principles face their hardest test when the highest bidder is the state, seeking power projection. Anthropic’s stance suggests some ethical lines are non-negotiable, even at the cost of a monumental contract. This sets a precedent that other AI firms will be measured against. Will OpenAI or Google DeepMind draw the same line, or will their usage policies bend under Defense Department pressure? The market is watching.

Second, the conflict underscores a terrifying governance vacuum. There is no universal, legally binding international treaty on military AI use. Companies are left to self-regulate via their own, often opaque, ethical frameworks. What happens when one company’s constitution conflicts with another’s, or with the policy of a close ally? This patchwork approach creates systemic risk and potential for catastrophic misunderstandings in a crisis. The Pentagon-Anthropic spat is a preview of a chaotic multipolar AI ethics landscape where a chatbot’s “refusal” could indirectly influence geopolitical calculations.

Third, it highlights the commercial-defense dialectic. The defense sector represents a massive, stable revenue stream for AI developers. Yet embracing it fully requires a philosophical surrender: transforming a tool built with human-centric safeguards into an instrument of state power, where interpretations of “harm” and “security” diverge dramatically. For Anthropic, choosing principles over a Pentagon contract is a bullish move for its brand among privacy-conscious civilians and researchers, but a risky play that could invite regulatory scrutiny or open doors for less scrupulous competitors.

The situation is a high-stakes case study in E-E-A-T (Experience, Expertise, Authoritativeness, Trustworthiness). It demonstrates Experience in the messy integration of emerging tech into legacy institutions. It requires Expertise to dissect the technical nature of Claude’s constraints versus the Pentagon’s technical requirements. The topic carries Authoritativeness because of its direct impact on national security policy and tech industry norms. Building Trust means presenting both sides fairly: the Pentagon’s duty to protect and Anthropic’s duty to prevent misuse, without sensationalism.

Ultimately, this is not about one company or one department. It is the opening act of a global drama. As AI capability surges, the pressure to deploy it in conflict-adjacent zones—cyber, information, logistics—will become irresistible. Who gets to define the red lines? Will it be corporate boards, anxious shareholders, democratic legislatures, or military commanders in the field? The Anthropic-Pentagon argument forces that conversation from theory into the boardroom and the Situation Room.

The resolution, whatever it may be, will echo for years. If Anthropic’s ethical wall holds, it empowers a model of tech resistance. If it crumbles, it signals that all ethical frameworks are commoditized and for sale. For the rest of us, the takeaway is clear: the values embedded in your AI assistant today are the same values that might one day govern the fog of war. This clash is the first draft of that terrifying chapter, and we are all reading along, hoping the final edit prioritizes humanity.
